For an analytics workload, columnstore indexes provide a world of benefits including storing data in columnar format, underlying high compression, and batch mode execution that increases efficiency of analytical queries.

When bulk loading data into columnstore indexes, it is recommended when possible to plan bulk load batch sizes so that they land up in compressed rowgroups, rather than in the uncompressed delta row store. A batch size of 1,024,00 or greater lands in a compressed rowgroup directly. Another advantage of landing in compressed row groups is that it generates log only for compressed rows, which results in significantly less log being generated.

Ingesting into compressed row groups does mean that the set of rows must be compressed, which requires a memory grant. Here is an example of reduced concurrency due to high memory grants when loading data in parallel into columnstore tables.

In this blog, we will set up and demonstrate that scenario, and then show a way to improve load performance using Query Store hints in Azure SQL Database.

Data load setup

In this example, we are loading data using a Spark job, however that is not a requirement. This could have been 32 parallel bulk load tasks from an application that uses SqlBulkCopy or SQLServerBulkCopy, or from an Azure Data Factory pipeline. We are attempting to optimize data load throughput as follows:

- Batch size of 1,024,576 (~ 1million) is used to maximize compression within each columnstore rowgroup

- 32 parallel bulk load tasks are used (the Spark cluster has 8 nodes, each using 4 cores)

Data load diagnostics

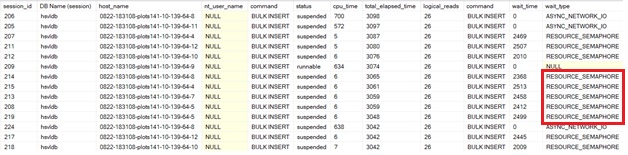

- sys.dm_exec_requests or sys.dm_os_waiting_tasks DMVs shows RESOURCE_SEMAPHORE waits that indicate a wait for a memory grant. There are 10+ sessions that are suspended waiting on a grant.

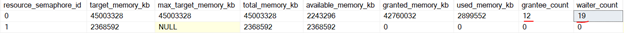

- sys.dm_exec_memory_grants show much higher memory is being granted than being used. This is an example of specific schema where memory grant heuristics err on the side of over-estimation.

- Sys.dm_exec_query_resource_semaphores snapshot in time shows 8 waiters waiting on a grant.

Resolving memory grant contention

There generally are 2 ways to handle this:

- Use the MAX_GRANT_PERCENT query hint to control the maximum grant size. Unfortunately, we cannot do this for the bulk inserts:

- Bulk insert APIs (SqlBulkCopy and SQLServerBulkCopy) don’t have any method to specify a memory grant hint. Spark/ADF/apps loading data commonly use these APIs.

- The BULK INSERT statement also doesn’t support the MAX_GRANT_PERCENT hint.

- Use Resource Governor and change the REQUEST_MAX_MEMORY_GRANT_PERCENT workload group option. Resource Governor isn’t supported in Azure SQL Database at this point.

Query Store hints to the rescue

With the introduction of Query Store hints, we can apply query level hints to queries that cannot be changed in the application. In the case of bulk load, this enables us to add the query level MAX_GRANT_PERCENT hint to the INSERT BULK statement invoked by the bulk insert APIs (not to be confused with BULK INSERT), and control the size of memory grant given to each statement.

To apply Query Store hints to the bulk load statements:

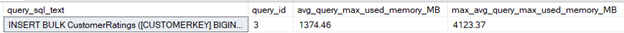

- Identify the query in question. Actual Memory grant can be also validated from actual query plan

SELECT qt.query_sql_text, q.query_id

, avg_query_max_used_memory_MB=round(avg(avg_query_max_used_memory)/128,2)

,max_avg_query_max_used_memory_MB=round(sum(avg_query_max_used_memory)/128,2)

FROM sys.query_store_runtime_stats stats

INNER JOIN sys.query_store_plan qp on qp.plan_id = stats.plan_id

INNER JOIN sys.query_store_query q ON q.query_id = qp.query_id

INNER JOIN sys.query_store_query_text qt on qt.query_text_id = q.query_text_id

WHERE query_sql_text like N'%BULK%' and query_sql_text not like N'%query_store%'

group by q.query_id, qt.query_sql_text

order by avg(avg_query_max_used_memory) desc

2. Apply a lower MAX_GRANT_PERCENT hint to the query, identifying the query by its query_id value from the previous step.

EXEC sys.sp_query_store_set_hints 3, N'OPTION (MAX_GRANT_PERCENT = 5)';

Note: The numeric value in the hint is a percentage of the configured memory grant limit for the workload group, which is 25% of the resource pool memory by default. In that sense, it is a percentage of a percentage. For more information, see KB3107401. You can figure out the approximate value to use in the hint by looking at the granted and used memory amounts in sys.dm_exec_memory_grants when the query executes without any hint, and then adjust it iteratively. Keep in mind that the optimal value may change over time depending on data and schema changes, available resources, etc.

3. View the hint to make sure it is in effect.

SELECT query_hint_id,

query_id,

query_hint_text,

last_query_hint_failure_reason,

last_query_hint_failure_reason_desc,

query_hint_failure_count,

source,

source_desc,

comment

FROM sys.query_store_query_hints

WHERE query_id = 3;

Running data load with the hint in place

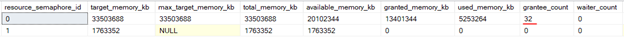

- All 32 bulk insert statements are able to acquire a grant:

- Each individual grant was substantially smaller.

Results

Here is the difference in performance of ingest of 9.6GB of data. By using Query Store hints to reduce memory grant size we elminated the waits for memory grants. This is another useful application of Query Store hints that empower the data engineer when explicit control on the application queries isn’t possible.

|

|

Default config |

With memory grant hint |

|

Load Time (seconds) |

331.2 seconds |

161.4 seconds |

|

Log Generation rate (MB/sec) |

21.5 MB/sec |

49 MB/sec |

|

CPU Usage |

20.3% |

44% |

|

RESOURCE_SEMAPHORE cumulative waits |

4080 seconds |

0 seconds |

Posted at https://sl.advdat.com/2WEogyY