Connect to satellites around the world and bring Earth observational data into Azure for analytics via Microsoft and partner ground stations. Apoorva Nori, from the Azure Space team, joins host Jeremy Chapman to take a first look at the Azure Orbital preview. We’ll show you how it works and how it fits into Microsoft’s strategy with Azure Space to bring cloud connectivity everywhere on Earth and to make satellite data accessible for everyday use cases. Azure Space marks the convergence between global satellite constellations and the cloud. As the two join together, our purpose is to bring cloud connectivity to even the most remote corners of the Earth, connect to satellites, and harness the vast amount of data collected from space. This can help solve both long-term trending issues affecting the Earth, like climate change, and short-term, real-time issues such as connected agriculture, monitoring and controlling wildfires, or identifying supply chain bottlenecks.

QUICK LINKS:

00:58 — Microsoft’s focus with Azure Space

02:16 — Azure Orbital

04:36 — See it in action

07:04 — Examples of space data readings

08:40 — How it works

10:28 — Use cases for world challenges

11:39 — Everyday use cases

15:36 — Wrap up

Link References:

For more on Azure Orbital, go to https://aka.ms/orbital

Unfamiliar with Microsoft Mechanics?

We are Microsoft’s official video series for IT. You can watch and share valuable content and demos of current and upcoming tech from the people who build it at Microsoft.

- Subscribe to our YouTube: https://www.youtube.com/c/MicrosoftMechanicsSeries?sub_confirmation=1

- Join us on the Microsoft Tech Community: https://techcommunity.microsoft.com/t5/microsoft-mechanics-blog/bg-p/MicrosoftMechanicsBlog

- Watch or listen via podcast here: https://microsoftmechanics.libsyn.com/website

Keep getting this insider knowledge, join us on social:

- Follow us on Twitter: https://twitter.com/MSFTMechanics

- Follow us on LinkedIn: https://www.linkedin.com/company/microsoft-mechanics/

Video Transcript:

- Up next, we’re joined by Apoorva Nori from the Azure Space team to take a first look at the Azure Orbital preview that allows you to connect via Microsoft and partner ground stations to satellites around the world, and bring Earth observational data into Azure for analytics. Now, we’re going to show you how it works and how it fits into Microsoft’s strategy with Azure Space to bring cloud connectivity everywhere on Earth, and to make space data more accessible for everyday use cases, like supply chain logistics to reduce bottlenecks. So Apoorva, thanks so much for joining us today on the show, and congrats on our upcoming Azure Orbital preview launch.

- Thanks, Jeremy. It’s a pleasure to be here and to share this latest milestone with everyone.

- So Azure Space was announced just a little over a year ago. And this included Azure Orbital, our managed ground station as a service as part of the Azure Space portfolio. In fact, the upcoming Orbital preview really marks a major step forward in the strategy. But before we get our hands on it and show it in action, can you share more context on Microsoft’s broader focus with Azure Space?

- Yeah, sure. So Azure Space really marks the convergence between the many global satellite constellations and our cloud. So on the one hand, the space ecosystem is rapidly expanding with multiple private and public satellite operators observing and connecting the Earth from multiple different orbits. And then on the other hand, with Azure, we have a massive global cloud network with unlimited elastic compute and expansive analytics capabilities. And as we begin to bring the two together, our purpose is to empower you by bringing cloud connectivity to even the most remote corners of Earth. And we can connect to satellites and harness the vast amount of data collected from space. And just think about what this can help us with. This can help solve both long-term trending issues affecting the earth like climate change, or more short-term, real-time issues. So that could be things like connected agriculture, monitoring and controlling wildfires, identifying supply chain bottlenecks, or any number of areas that to date just may not have been possible at global scale.

- Okay, so we’re taking a comprehensive multi-satellite operator, multi-band, and also multi-orbit approach to make cloud connectivity and space data ubiquitous. So how does Azure Orbital then fit into the picture?

- Yeah, so Azure Orbital is how we establish contact between the satellite networks and our cloud. And Azure Orbital ground station is a fully managed service. And as you saw in our graphic, these are cloud-integrated satellite systems with giant antennas that are co-located or directly connected with our data centers. So here you’re seeing our ground station at the West US 2 data center in Quincy, Washington. And this is one of two Microsoft-owned ground stations to date. The other one’s located in Gävle, Sweden, and we’ve got plenty more coming. And we also have partner ground stations that are integrated with Azure. And that really expands our reach considerably. For example, KSAT’s facility in Norway provides more than 100 antennas, and even more around the world in both hemispheres. And we’ve even begun work to integrate USEI and ViaSat’s Real-Time Earth network. In fact, today we have Northern Hemisphere and some Southern Hemisphere coverage. And that’s set to increase significantly with both Microsoft and partner efforts.

- And to be clear, you know, it’s the satellite operators themselves who Azure Orbital is really targeted for.

- That’s right. And it’s made possible really by advances over the last decade. You know, beyond the expected government or military-owned entities, satellite providers are emerging more and more from the private sector in fields like internet and broadcast communications or aerospace. Think of companies like SpaceX with their Starlink internet service constellation, or Airbus who operate the highest resolution satellites on the market. So where Azure Orbital comes in is it gives satellite operators a way to access and analyze their data more efficiently. They can use our ground stations or partner ground stations to uplink and communicate with their satellites to then downlink that data into Azure via our global data center and networking fabric, all without additional backhaul or ingress fees. And, of course, from there, they can take advantage of Azure’s hyperscale compute and analytical services to rapidly gather insights. And also integrate with our productivity stack, including Microsoft 365 and Teams.

- And really, you know, best of all here, as this space data becomes more accessible, like you mentioned earlier, there’s this tremendous downstream opportunity then for Azure customers to use this data in the future to solve specific business needs. So can we see all of this in action?

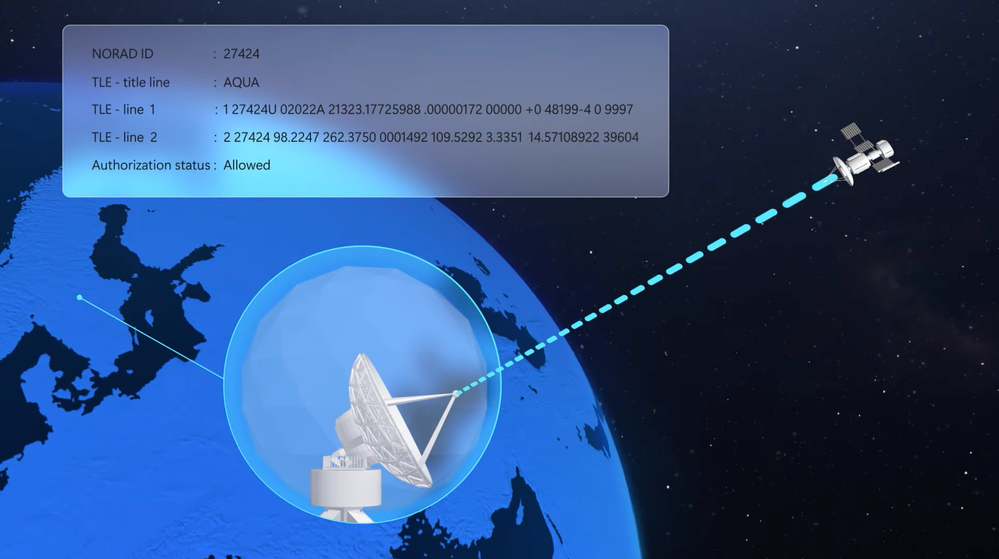

- Yes, you can. So this is different to anything else in the cloud. The really cool part of what we’re doing is that you’re literally able to remotely control the Orbital ground station to connect to your satellite fleet as it traverses the Earth’s orbit. Here, you’re looking at the Azure Orbital portal. And this is where as a satellite operator, you can register a spacecraft, such as a satellite, and create a contact profile to define what you want to accomplish and where the data lands. And then once you’ve done those steps, you can schedule contacts to reserve time windows on an antenna for communicating with a satellite. So in my case, I’ve already set up a few contact profiles. And as you can see, I’ve also registered a few spacecraft. Now, if I click into one of these, I’ll use an Earth observational satellite called Aqua from NASA. It’s a public satellite, and it’s always broadcasting on the X-band frequency. Here I can get the contact opportunities by ground station. Now, I can filter based on the ground station that I want. For example, this is a Microsoft ground station, as you can see in the GS owner column. Now, if I filter on this ground station in Svalbard, Norway, you’ll see that it’s owned by KSAT. Now, I want to schedule a contact at this time here. So I’ll select it and hit Schedule. And this triggers the remote movement of an antenna at the ground station to track the satellite as it also moves through orbit. Now, let me explain what makes this contact possible. The spacecraft’s metadata includes its TLE, or its two-line element, which is used with an algorithm to locate a satellite’s position and trajectory in orbit so that the Orbital antenna can point to it. And TLE values change over time. So Orbital allows you to update this value as often as you’d like. And that pushes the new updated TLE to every single antenna in the network, including those at partner sites.
Now, this is really important in accurately calculating the time windows that you can schedule on each antenna per contact. In fact, with Azure Orbital’s underlying APIs, we’re handling the machine-to-machine operations for you, as well as the data integration with the Azure network backbone and our data services. So this also means that even though we’re connecting via KSAT’s ground station in this case, we’ve set this up for minimal latency. And again, that backhaul to Azure from any of our partner ground stations is included. So you don’t need to pay separately for data ingress.
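To make the TLE discussion above concrete, here is a minimal Python sketch that reads the fixed-column orbital elements from the second line of a two-line element set and derives the orbital period. It is an illustration only: the sample input is a widely published historical ISS element set rather than Aqua's, and the function names are made up for this example. Turning these elements into an actual position and antenna pointing angles requires an orbit propagator (typically SGP4), which is not shown here.

```python
# Sample input only: a widely published historical ISS element set,
# not the Aqua spacecraft used in the demo.
LINE1 = "1 25544U 98067A   08264.51782528 -.00002182  00000-0 -11606-4 0  2927"
LINE2 = "2 25544  51.6416 247.4627 0006703 130.5360 325.0288 15.72125391563537"


def tle_checksum(line: str) -> int:
    """Modulo-10 checksum over the first 68 columns: digits count as their
    value, each minus sign counts as 1, and everything else counts as 0."""
    total = 0
    for ch in line[:68]:
        if ch.isdigit():
            total += int(ch)
        elif ch == "-":
            total += 1
    return total % 10


def parse_tle_line2(line: str) -> dict:
    """Read the fixed-column orbital elements from TLE line 2."""
    if len(line) != 69:
        raise ValueError("a TLE line must be exactly 69 characters long")
    if int(line[68]) != tle_checksum(line):
        raise ValueError("TLE line 2 failed its checksum")
    mean_motion = float(line[52:63])  # revolutions per day
    return {
        "catalog_number": int(line[2:7]),
        "inclination_deg": float(line[8:16]),
        "raan_deg": float(line[17:25]),
        "eccentricity": float("0." + line[26:33]),  # decimal point is implied
        "arg_perigee_deg": float(line[34:42]),
        "mean_anomaly_deg": float(line[43:51]),
        "mean_motion_rev_per_day": mean_motion,
        "period_minutes": 1440.0 / mean_motion,  # 1440 minutes in a day
    }


elements = parse_tle_line2(LINE2)
print(f"Inclination: {elements['inclination_deg']} deg")
print(f"Orbital period: {elements['period_minutes']:.1f} min")
```

Because the elements drift as the orbit is perturbed, contact windows computed from a stale TLE become less accurate, which is why the ability to update the TLE frequently matters.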

- Okay, so everything is configured then to allow the ground station to connect with the satellite, then downlink the data and pass it into Azure, but what are some of the examples then of the readings that we’ll get now?

- Yeah, so we get a few different streams of data. Starting in Azure Event Hubs, I’ve set up a namespace and a hub to listen for ground station telemetry events during my Orbital contact. And I have a consumer script that’s triggered by these events to grab data and append it to my telemetry logs as each new event comes in. Now, I’ve already kicked off the script, and it’s processing the data and adding it to our JSON file every time a value changes. This happens to be telemetry that’s coming in from multiple satellites and multiple antennas. So if we pause and pick this log here, you can see the changing position of the antenna in addition to the RF power, which is just a measure of signal strength. Now, all of these data points allow you to assess how well the satellite’s being tracked, and ultimately how successful the pass is in establishing that contact. And you can also use this information for planning activities for your specific mission. So for example, you might only have a 10-minute window in which to receive and send a finite amount of data to the satellite. And this telemetry can really help you optimize your contact. And then beyond these data points from the ground, we can of course see the data from the satellite as well. The Aqua satellite captures encoded weather imagery data, which once decoded can be viewed. And you can see an example of the output here. You’ll recognize it from weather forecast images that you’ve probably seen. And the key thing here is that all of this is happening in one consolidated view with one set of APIs across multiple satellite networks. So that means if you’re automating your mission, you don’t need to worry about integrating with different networks.
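The consumer script itself is not shown in the video. As a rough sketch of the idea described above (append a telemetry record to a log only when a value changes), the following Python uses the standard library alone. The field names `antenna_id`, `azimuth_deg`, `elevation_deg`, and `rf_power_dbm` are assumptions made for illustration, not the actual Azure Orbital telemetry schema.

```python
import json
from pathlib import Path

# Hypothetical field names; the real telemetry schema is not shown in the demo.
TRACKED_FIELDS = ("azimuth_deg", "elevation_deg", "rf_power_dbm")


class TelemetryLogger:
    """Append an event to a JSON Lines file only when a tracked value changes."""

    def __init__(self, log_path: Path) -> None:
        self.log_path = log_path
        self._last_seen: dict = {}  # antenna id -> last tuple of tracked values

    def handle_event(self, event: dict) -> bool:
        """Return True if the event was written, False if it repeated the last one."""
        antenna = event["antenna_id"]
        values = tuple(event.get(name) for name in TRACKED_FIELDS)
        if self._last_seen.get(antenna) == values:
            return False
        self._last_seen[antenna] = values
        with self.log_path.open("a", encoding="utf-8") as log_file:
            log_file.write(json.dumps(event) + "\n")
        return True


if __name__ == "__main__":
    logger = TelemetryLogger(Path("telemetry.jsonl"))
    sample = {"antenna_id": "antenna-1", "azimuth_deg": 181.2,
              "elevation_deg": 12.5, "rf_power_dbm": -62.0}
    print(logger.handle_event(sample))        # first sighting is written
    print(logger.handle_event(dict(sample)))  # identical values are skipped
```

With the `azure-eventhub` Python package, a handler like this could plausibly be called from the `on_event` callback passed to `EventHubConsumerClient.receive`, using `event.body_as_json()` to obtain the dictionary. That wiring is omitted here so the sketch stays runnable without an Azure subscription.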

- Okay, so how is this then all working under the hood?

- So there are a lot of components in motion, literally. Remember that all of this is happening while the Earth is rotating, and the satellites are orbiting in various paths and speeds and distances from Earth. So depending on the payload, they can switch between ground stations to follow that satellite feed. And we communicate with satellites over X-, S-, and UHF-bands with even more to come. With the connection established, any data passed into Azure enters over the Azure Orbital virtual network where we process the data. And this gives you direct connection to our Azure network backbone. There, in addition to operations for antenna control and tracking that I just mentioned, we can orchestrate multiple activities, schedule contacts with the spacecraft, monitor inbound telemetry, and then decrypt and normalize inbound data feeds. And once that data enters the Azure data center, as an operator, you have full authority and flexibility on what happens next. When it comes to demodulation and decryption, Orbital provides managed software modems from our marketplace. Equally, we also facilitate the transport of the raw radio-frequency payload data that’s direct to your virtual network in Azure. And that’s where you can use software modems that you may have already built.

- I can see that you also had DIFI, in this case, called out as a standard.

- Yeah, that’s right. So it can be a huge interoperability challenge when it comes to the interpreting of data coming from different equipment providers. So our team at Microsoft co-founded an open standards consortium effort. It’s called DIFI, or Digital Intermediate Frequency Interoperability, for satellite software-defined communication. And this is just to be able to further virtualize the ground segment of satellite communications, and also remove the potential for vendor lock-in.

- Now, with the data more available, can we dig a bit deeper into the use cases for that data?

- Yeah, so anyone can use the Aqua data, but as you start getting into the higher resolution data, there are so many more use cases. For example, we have a partnership with Airbus that has some of the highest resolution imagery available, and that allows us to do some really powerful things. Combining the power of Azure with this satellite imagery ranging from high-resolution 30 centimeters to more than 20 meters, we can create a mapping of inventory to identify wetlands that are at risk of carbon fixation, decomposition, and sequestration. Now, this data can also help provide answers to questions like how wetlands are changing over time. Equally, natural disasters are increasing every year, and they can get out of control if first responders don’t have the freshest data to assess rapidly evolving situations. Azure, combined with the power of high-resolution satellite data, can serve as another set of eyes for firefighters, identifying predictors for a wildfire anywhere in the world from space, and connecting first responders on the ground with the quickest and safest escape routes.

- And so then this is going to really help, I think, with some of the world’s biggest challenges, but what’s the potential then for using space data for maybe some more everyday practical use cases?

- Yeah, so where this can get really powerful is when we combine Azure services like Custom Vision as a part of Azure Cognitive Services. For example, we’re experiencing challenges today with managing container ships and logistics for the free flow of commerce. So here, what you’re seeing is a basic model we’ve trained using aerial photography of container ships. And you can see that the model is accurately assessing the container ships in frame. But because this is from a plane or a drone, the frame and time series data is limited by its altitude and flight time. So now let’s scale this out to establish a way to track thousands of ships over time using similar machine learning models that are running instead using satellite imagery. So here you can see the expanded frame we can track over time from various ports of interest. And I can see, for example, the levels of congestion to predict timing to unload my shipments, or potentially reroute ships to load balance the work across additional ports. Now, behind all of this, I’m using a Jupyter Notebook that’s running in VS Code. The satellite I’m pulling data from is the European Space Agency’s Sentinel-2 Earth observation spacecraft. We’ll start off our script by importing all of the dependencies, which include standard data manipulation, image processing, and some visualization libraries, along with some Microsoft tools for interfacing with Azure Cognitive Services and Planetary Computer, which is part of the Microsoft AI for Earth initiative. Now, here, we’ve already trained a Custom Vision prediction model, and all you need are a set of credentials to be able to make predictions with it. I can use Planetary Computer’s STAC, or SpatioTemporal Asset Catalog, APIs to isolate some regions of interest out of the massive corpus of data that we’ve collected over time from Sentinel-2. And I’m even filtering here on image quality parameters such as cloud coverage.
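The notebook's search code is not shown in the transcript. As a hedged sketch of what a STAC search restricted by region, date range, and cloud cover can look like, the Python below builds a request body in the shape used by the STAC API item search with the query extension. The collection ID `sentinel-2-l2a`, the bounding box, the dates, and the cloud-cover threshold are all assumptions chosen for illustration. The body is only constructed and printed, not sent.

```python
import json

# Public STAC API search endpoint for Planetary Computer.
STAC_SEARCH_URL = "https://planetarycomputer.microsoft.com/api/stac/v1/search"


def build_search_body(bbox, start_date, end_date, max_cloud_cover, limit=100):
    """Build a STAC item-search request for Sentinel-2 scenes over a region."""
    west, south, east, north = bbox
    # Simplification: boxes that cross the antimeridian are not handled.
    if not (west < east and south < north):
        raise ValueError("bbox must be [west, south, east, north]")
    return {
        "collections": ["sentinel-2-l2a"],  # assumed collection ID
        "bbox": [west, south, east, north],
        "datetime": f"{start_date}T00:00:00Z/{end_date}T23:59:59Z",
        # Query extension: keep only scenes below the cloud-cover threshold.
        "query": {"eo:cloud_cover": {"lt": max_cloud_cover}},
        "limit": limit,
    }


# Rough, illustrative bounding box around the Port of Los Angeles.
PORT_OF_LA_BBOX = [-118.30, 33.70, -118.15, 33.78]

body = build_search_body(PORT_OF_LA_BBOX, "2021-01-01", "2021-12-31",
                         max_cloud_cover=10)
print(json.dumps(body, indent=2))
```

A body like this could be sent as a POST request to `STAC_SEARCH_URL`, or the same filters could be expressed through a STAC client library. Either way, the point is that the region and quality filters are applied by the catalog, so only the matching scenes need to be downloaded.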
Now, once I’ve narrowed our search, I can now call Cognitive Services to identify ships and their locations within these Earth observational images. All we’re doing in this cell is using our predictor, making predictions in our images, and logging some of the information like where the ships are, and what the model’s confidence in identifying those ships is. Now let’s take a look at some of the images that we’ve made predictions on, just to get a feel for what the model’s doing. Here, we can see an object that the model is quite confident is a ship. And then here’s a prediction with much lower confidence, and it’s clearly not a ship. So now that we’ve run all of our images through the ship detection model, we can start to do some analysis on historic trends within this data. And since we’re running a forecasting model, we’ll want to pull in as much data as possible. So I’ve expanded our Sentinel data set to stretch back as far as 2015. And I also want to filter out low-confidence model predictions, so we can more accurately visualize the number of ships in the LA Port harbor over time. Once we have this data, we can start to do standard analysis on time series data by applying techniques like anomaly detection and forecasting. And here, we’re building a really rough forecast just to see what kinds of volumes we might anticipate in the future. While this isn’t a perfect forecast, and ideally we’d want to do much more validation on it, we can use it to look at what this preliminary model learned, just to get a high-level sense of some of the patterns in this data set. For example, the forecast breakdown shows a slowly increasing trend in the number of ships, with a strong seasonality component where the number of ships is significantly lower in the months of May and June, but higher in September and October.
So what we’ve essentially done here is take large amounts of Earth observational data, and then created time series data out of it to drive these insights and predictions.
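The step from per-image detections to a time series can be sketched in a few lines of Python. This is not the notebook from the demo: the detections below are invented sample values, the 0.8 confidence threshold is an arbitrary assumption, and a per-month average stands in for the forecasting model's seasonality component.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# Invented sample detections: (image acquisition date, model confidence).
detections = [
    (date(2021, 5, 3), 0.93), (date(2021, 5, 3), 0.41),
    (date(2021, 5, 18), 0.88),
    (date(2021, 9, 2), 0.97), (date(2021, 9, 2), 0.91), (date(2021, 9, 2), 0.35),
    (date(2021, 9, 17), 0.86), (date(2021, 9, 17), 0.90),
]


def ships_per_image(detections, min_confidence):
    """Count detections at or above the confidence threshold, per image date."""
    counts = defaultdict(int)
    for acquired, confidence in detections:
        # Adding 0 keeps images whose detections were all filtered out.
        counts[acquired] += 1 if confidence >= min_confidence else 0
    return dict(counts)


def monthly_profile(counts_by_date):
    """Average ship count per image for each calendar month (1 to 12)."""
    by_month = defaultdict(list)
    for acquired, count in counts_by_date.items():
        by_month[acquired.month].append(count)
    return {month: mean(values) for month, values in sorted(by_month.items())}


counts = ships_per_image(detections, min_confidence=0.8)
profile = monthly_profile(counts)
for month, average in profile.items():
    print(f"month {month:02d}: {average} ships per image on average")
```

Filtering before counting matters because a low threshold inflates the counts with false positives, which would then leak into any trend or seasonality estimate built on top of them.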

- Nice, and I really love the example in this case because we’re using the space data to address a topical problem, I think, that we’re all feeling the effects of today.

- Yeah, thanks. And that’s just one example, Jeremy. With Azure Space and our partner ecosystem of geospatial and data analytics providers like Airbus, Esri, Blackshark, and Orbital Insight, we’re making the data more accessible for Azure customers. And also, on the cloud connectivity side with Azure Orbital, we have the ultimate cloud-enabled edge devices both on and now off the planet. And you can imagine what scenarios this can light up from self-driving cars to media content distribution, and so much more.

- Truly groundbreaking stuff. So what’s next, and where can people watching learn more?

- So whether you’re a space enthusiast or an organization with a spacecraft that wants to find out more, you can visit aka.ms/orbital.

- Thanks so much for doing this today, Apoorva. And also, keep checking back to Microsoft Mechanics for all the latest updates. And be sure to subscribe if you haven’t already. And thank you so much for watching.