This how-to article explores using Azure Percept to improve worker safety and builds a proof of concept that connects to a microcontroller to control an alarm. It describes how to connect Azure Percept to an Arduino, load the firmware, and use .NET IoT in a Docker container.

The importance of Worker Safety

According to OSHA, the most common causes of worker deaths on construction sites in America are the following:

- Falls (accountable for 33.5% of construction worker deaths)

- Struck by an object (accountable for 11.1% of construction worker deaths)

- Electrocutions (accountable for 8.5% of construction worker deaths)

- Caught in/between (accountable for 5.5% of construction worker deaths)

Safety matters on every job site, and there are areas that workers must avoid. According to the Construction Dive article "Elevator-related construction deaths on the rise":

“While the number of total elevator-related deaths among construction and maintenance workers is relatively small when compared to total construction fatalities, the rate of such deaths doubled from 2003 to 2016, from 14 to 28, with a peak of 37 in 2015, according to a report from The Center for Construction Research and Training (CPWR). However, falls are the cause of most elevator-related fatalities, just like in the construction industry at large. More than 53% of elevator-related deaths were from falls, and of those incidents, almost 48% were from heights of more than 30 feet.”

These statistics suggest that many of these deaths come down to being in the wrong place at the wrong time. Warning signs are typically posted around dangerous areas, but they can be ignored or forgotten.

What if we could use Azure Percept to monitor these dangerous areas and warn workers to stay away? The goal is a deterrent: an audible alarm that reminds workers of the possible danger. Once workers leave the area and are no longer detected, the alarm stops automatically. During maintenance periods, detection can also be disabled remotely, subject to the authorizations needed to comply with regulations.

Getting Started

We will look at the hardware and software requirements and the architecture diagram for this project, then walk through step-by-step instructions for setting up and deploying the application.

Hardware

Azure Percept Device Kit

https://www.microsoft.com/d/azure-percept-dk/8mn84wxz7xww

ELEGOO UNO R3 Super Starter Kit Compatible With Arduino IDE

https://www.elegoo.com/products/elegoo-uno-project-super-starter-kit

Software

Azure Subscription (needed for Azure Percept)

Visual Studio Code

https://code.visualstudio.com/

Azure IoT Tools Extensions for VS Code

https://marketplace.visualstudio.com/items?itemName=vsciot-vscode.azure-iot-tools

Azure IoT Edge Dev Tools

https://github.com/Azure/iotedgedev

Docker with DockerHub account

https://docs.docker.com/get-docker/

Arduino IDE

https://www.arduino.cc/en/software

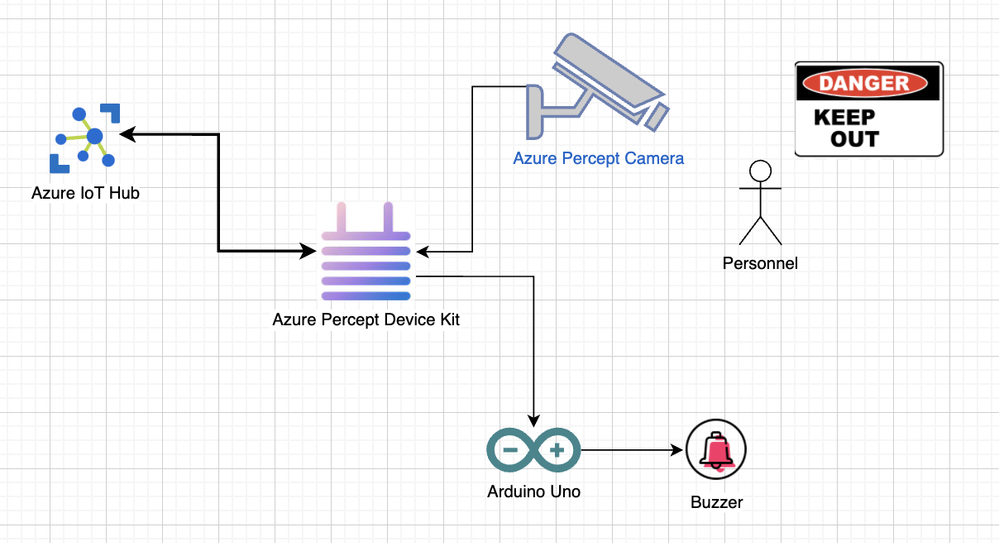

Overall architecture

When the camera detects a worker in the dangerous area, an alarm sounds and telemetry messages are sent to the cloud. The Azure Percept Device Kit runs a people detection model that processes frames from the camera. When a worker is detected, a message is sent to the Arduino to sound the buzzer, and the same message is forwarded to IoT Hub.
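To make the intended behavior concrete, here is a minimal sketch of the alarm logic, written in Python for brevity (the module in this project is C#). The `BuzzerAlarm` class, the `write_pin` callback and the `enabled` maintenance flag are hypothetical names of my own, not taken from the project; pin 12 is the buzzer pin used later in this article.

```python
from typing import Callable

BUZZER_PIN = 12  # buzzer pin used in this article


class BuzzerAlarm:
    """Turns the buzzer on while a worker is detected and off otherwise."""

    def __init__(self, write_pin: Callable[[int, bool], None], pin: int = BUZZER_PIN):
        self._write_pin = write_pin  # hypothetical callback that drives the pin
        self._pin = pin
        self._on = False
        self.enabled = True  # hypothetical switch for maintenance periods

    def update(self, worker_detected: bool) -> bool:
        """Apply the latest detection result and return the buzzer state."""
        want_on = self.enabled and worker_detected
        if want_on != self._on:
            # Only touch the hardware when the state actually changes.
            self._write_pin(self._pin, want_on)
            self._on = want_on
        return self._on
```

Calling `update(True)` repeatedly writes to the pin only once, and the first `update(False)` after that silences the buzzer, which matches the "stop automatically when nobody is detected" goal.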

Instructions

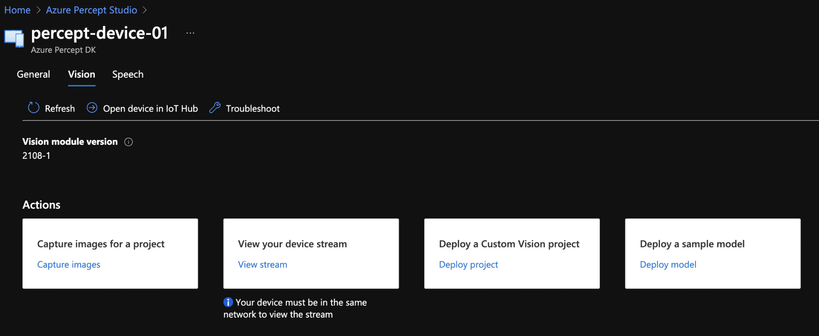

Set People Detection Model

Once the Azure Percept Device Kit is connected to the cloud, we can select the People Detection model by going to the Vision tab -> Deploy a Sample Model.

Set up Arduino module

I followed the quick-start instructions on the .NET IoT GitHub page.

https://github.com/dotnet/iot/tree/main/src/devices/Arduino#quick-start

You can download the Firmata firmware that I used for this project at:

https://github.com/rondagdag/arduino-percept/blob/main/firmata/percept-uno/percept-uno.ino

Here are the steps:

- Open the Arduino IDE

- Go to the library manager and check that you have the "ConfigurableFirmata" library installed

- Open "percept-uno.ino" from the device binding folder, or go to http://firmatabuilder.com/ to create your own custom Firmata firmware. Make sure that at least the features you need are checked.

- Upload this sketch to your Arduino.

Send alert to Arduino module

Open the Percept Edge Solution project in Visual Studio Code

https://github.com/rondagdag/arduino-percept/tree/main/PerceptEdgeSolution

This module can run locally on your machine if you have the Azure IoT Edge Dev Tool and the Azure IoT Tools extension for VS Code installed.

To run it locally, you may have to edit module.json and change the repository to use your own Docker Hub username:

"repository": "rondagdag/arduino-percept-module"

The Arduino Module is a C# application that controls the Arduino device. It runs as a Docker container and uses the .NET IoT bindings. Here are the NuGet packages I used:

    <PackageReference Include="Microsoft.Azure.Devices.Client" Version="1.38.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration" Version="5.0.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration.Abstractions" Version="5.0.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration.Binder" Version="5.0.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration.EnvironmentVariables" Version="5.0.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration.FileExtensions" Version="5.0.0" />
    <PackageReference Include="Microsoft.Extensions.Configuration.Json" Version="5.0.0" />
    <PackageReference Include="System.Runtime.Loader" Version="4.3.0" />
    <PackageReference Include="System.IO.Ports" Version="5.0.1" />
    <PackageReference Include="System.Device.Gpio" Version="1.5.0" />
    <PackageReference Include="Iot.Device.Bindings" Version="1.5.0" />
    <PackageReference Include="Microsoft.Extensions.Logging.Console" Version="5.0.0" />

The module tries to connect to two Arduino USB ports. I noticed that after a reboot the Arduino can show up on either of these ports:

/dev/ttyACM0

/dev/ttyACM1

To run this locally on your machine, you may have to change the port to the one the Arduino IDE reports for the UNO (for example, COM3 on Windows or a /dev/cu.usbmodem* device on macOS).

The module connects at a baud rate of 115200.
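The "try one port, then the other" idea can be sketched as follows. This is an illustration in Python, not the module's C# code: `open_first_available` and the `open_port` callback are hypothetical names, and the callback stands in for whatever serial library you use.

```python
from typing import Callable, Iterable, Tuple, TypeVar

T = TypeVar("T")

CANDIDATE_PORTS = ("/dev/ttyACM0", "/dev/ttyACM1")  # ports listed in the article
BAUD_RATE = 115200


def open_first_available(
    open_port: Callable[[str, int], T],
    ports: Iterable[str] = CANDIDATE_PORTS,
    baud_rate: int = BAUD_RATE,
) -> Tuple[str, T]:
    """Try each port in order; return (port, connection) for the first that opens."""
    errors = []
    for port in ports:
        try:
            return port, open_port(port, baud_rate)
        except OSError as exc:  # port missing or busy, so try the next one
            errors.append(f"{port}: {exc}")
    raise ConnectionError("no Arduino port could be opened: " + "; ".join(errors))
```

With pyserial installed, for example, the callback could be `lambda port, baud: serial.Serial(port, baud)`, since its `SerialException` derives from `OSError`. On Windows or macOS you would pass your own port name in `ports`.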

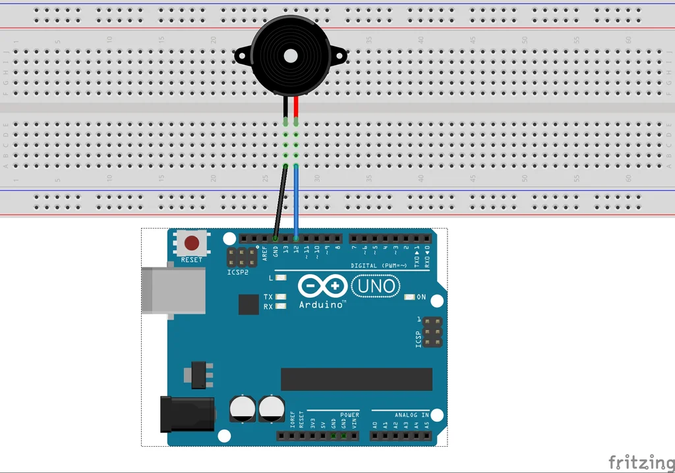

The buzzer is connected to pin 12 of the Arduino.

As messages arrive from the IoT Edge Hub, the module inspects each one to determine whether a worker has been detected. If the payload contains items in the NEURAL NETWORK node, the module sends the alert to the Arduino.

Here’s the code to analyze.
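As a rough illustration of that check (in Python rather than the module's C#), the sketch below treats a message as a detection when its JSON body has a non-empty list under the detection node. I am assuming the key is spelled `NEURAL_NETWORK` and that each item is an object; the function names and the sample payload fields are hypothetical.

```python
import json
from typing import Any, List

DETECTION_KEY = "NEURAL_NETWORK"  # assumed spelling of the detection node


def detections(payload: str) -> List[Any]:
    """Return the items under the detection node, or [] if there are none."""
    try:
        body = json.loads(payload)
    except json.JSONDecodeError:
        return []  # not JSON, so nothing to act on
    if not isinstance(body, dict):
        return []
    items = body.get(DETECTION_KEY)
    return items if isinstance(items, list) else []


def should_sound_alarm(payload: str) -> bool:
    """True when the message reports at least one detection."""
    return len(detections(payload)) > 0
```

A message with an empty list, or a simulated temperature payload with no detection node at all, returns `False`, so the buzzer is switched off as soon as nobody is detected.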

Running this locally on your machine can be tricky and may require some modifications. You may have to modify the deployment template and the code so that it accepts the payload coming from the Simulated Temperature Sensor module.

Notice how the ports /dev/ttyACM0 and /dev/ttyACM1 are mapped into the Docker container:

    "ArduinoModule": {
      "version": "1.0",
      "type": "docker",
      "status": "running",
      "restartPolicy": "always",
      "settings": {
        "image": "${MODULES.ArduinoModule}",
        "createOptions": {
          "HostConfig": {
            "Privileged": true,
            "Devices": [
              {
                "PathOnHost": "/dev/ttyACM0",
                "PathInContainer": "/dev/ttyACM0",
                "CgroupPermissions": "rwm"
              },
              {
                "PathOnHost": "/dev/ttyACM1",
                "PathInContainer": "/dev/ttyACM1",
                "CgroupPermissions": "rwm"
              }
            ]
          }
        }
      }
    }
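If you need to map a different set of ports, the create options follow a regular pattern: one entry per device, with the same path on the host and in the container. A small Python sketch that generates that structure is shown here; the helper names are hypothetical, and the field names are the ones from the snippet above.

```python
import json
from typing import Any, Dict, Iterable, List


def device_mappings(ports: Iterable[str], permissions: str = "rwm") -> List[Dict[str, str]]:
    """Build one Devices entry per serial port, mapped to the same path in the container."""
    return [
        {"PathOnHost": port, "PathInContainer": port, "CgroupPermissions": permissions}
        for port in ports
    ]


def create_options(ports: Iterable[str]) -> Dict[str, Any]:
    """Build the createOptions object used for the Arduino module."""
    return {"HostConfig": {"Privileged": True, "Devices": device_mappings(ports)}}


if __name__ == "__main__":
    # Print JSON that can be pasted into the deployment template.
    print(json.dumps(create_options(["/dev/ttyACM0", "/dev/ttyACM1"]), indent=2))
```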

Locally, the edgeHub routes look like this. The Simulated Temperature Sensor passes its output to the Arduino Module, which filters the data, controls the Arduino, and sends the telemetry up to the cloud.

    "$edgeHub": {
      "properties.desired": {
        "schemaVersion": "1.2",
        "routes": {
          "sensorToArduinoModule": "FROM /messages/modules/SimulatedTemperatureSensor/outputs/temperatureOutput INTO BrokeredEndpoint(\"/modules/ArduinoModule/inputs/input1\")",
          "ArduinoModuleToIoTHub": "FROM /messages/modules/ArduinoModule/outputs/* INTO $upstream"
        },
        "storeAndForwardConfiguration": {
          "timeToLiveSecs": 7200
        }
      }
    }

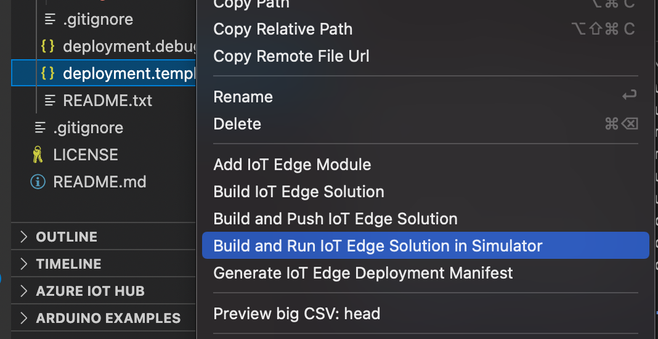

You can test this out in the simulator.

Deploy setup

To deploy this to Azure Percept, open the device in IoT Hub and go to Set Modules -> Add -> IoT Edge Module.

Fill in the module name and image URI.

Also set the Container Create Options to map the correct ports.

Specify the routes. In this case, data coming from the Azure Eye Module has to be pushed to the Arduino Module.

Here’s what the routes would look like:

    "$edgeHub": {
      "properties.desired": {
        "routes": {
          "AzureSpeechToIoTHub": "FROM /messages/modules/azureearspeechclientmodule/outputs/* INTO $upstream",
          "AzureEyeModuleToArduinoModule": "FROM /messages/modules/azureeyemodule/outputs/* INTO BrokeredEndpoint(\"/modules/ArduinoModule/inputs/input1\")",
          "ArduinoModuleToIoTHub": "FROM /messages/modules/ArduinoModule/outputs/* INTO $upstream"
        },
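The route strings all follow the same two shapes: module output to module input, or module output to IoT Hub. The Python sketch below builds them from module names, which makes it easier to see what changes between the local and the deployed setup. The helper names are hypothetical; the module, output and input names are the ones used in this article.

```python
def module_route(source_module: str, target_module: str,
                 output: str = "*", target_input: str = "input1") -> str:
    """Route messages from one module's output to another module's input."""
    return (
        f"FROM /messages/modules/{source_module}/outputs/{output} "
        f'INTO BrokeredEndpoint("/modules/{target_module}/inputs/{target_input}")'
    )


def upstream_route(source_module: str, output: str = "*") -> str:
    """Route a module's output up to IoT Hub."""
    return f"FROM /messages/modules/{source_module}/outputs/{output} INTO $upstream"


# Deployed setup: the Azure Eye Module feeds the Arduino Module.
routes = {
    "AzureEyeModuleToArduinoModule": module_route("azureeyemodule", "ArduinoModule"),
    "ArduinoModuleToIoTHub": upstream_route("ArduinoModule"),
}
```

For the local setup, only the source changes: `module_route("SimulatedTemperatureSensor", "ArduinoModule", output="temperatureOutput")`. When the dictionary is serialized with `json.dumps`, the inner quotes are escaped as `\"`, as in the snippets above.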


Summary

Keeping workers safe is essential on any job site. Azure Percept can help by detecting people and connecting to different alerting systems. We set up the Azure Percept Dev Kit to use people detection and connected an Arduino with a buzzer to trigger an audible alarm. This lets the Azure Percept Dev Kit expand its capabilities and broaden its use cases. Let me know in the comments below if this post helped you; I am interested in hearing other ideas and use cases.

Resources

Reference Code:

https://github.com/rondagdag/arduino-percept

Getting Started With Azure Percept:

https://docs.microsoft.com/azure/azure-percept/

Join the adventure and get your own Percept:

Get Azure

https://www.azure.com/account/free


Ron Dagdag

Lead Software Engineer

During the day, Ron Dagdag is a Lead Software Engineer with 20+ years of experience working on a number of business applications using a diverse set of frameworks and languages. He currently supports developers at Spacee with their IoT, Cloud and ML development. On the side, Ron Dagdag is an active participant in the community as a Microsoft MVP, speaker, maker and blogger. He is passionate about Augmented Intelligence, studying the convergence of Augmented Reality/Virtual Reality, Machine Learning and the Internet of Things. https://www.linkedin.com/in/rondagdag/

Posted at https://sl.advdat.com/3mTr3xq